As a website grows, so do its storage and bandwidth needs, and with this growth come increased costs. One attractive way to keep a site fast, or even to speed it up and simultaneously cut down on expenses, is to move your large and numerous static files to a cloud-based file storage system like Amazon S3. Hop Studios did this recently for foursquare.org, and learned a lot in the process. So we thought we’d share how we did it.

We built foursquare.org using ExpressionEngine several years ago. The site started with a significant volume of videos, audio files, PDFs and images that were all stored on and served from their local server, and that’s only grown over the years. But as the total volume of files and traffic increased, there was a multiplicative effect. Facing a growing need for more server space, faster load times and a streamlined approach to their site, Foursquare began looking into better hosting options, and came up with Amazon S3.

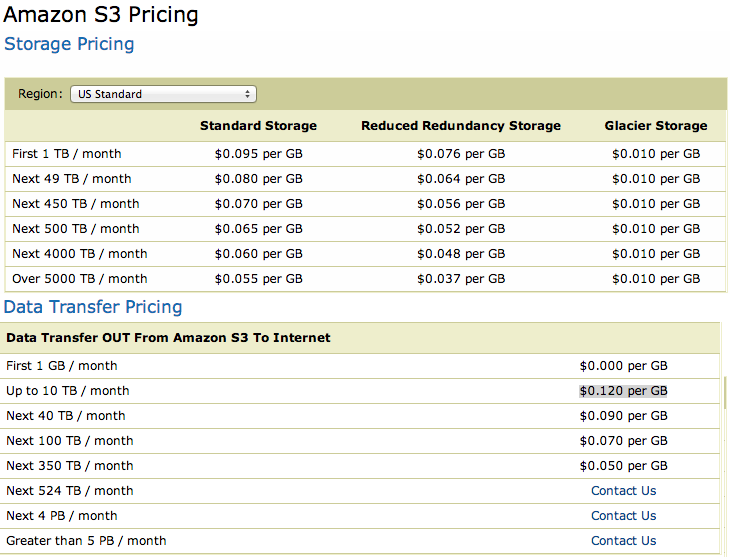

Amazon S3 is a cloud-based storage service run by (obviously) Amazon. It handles static files for data giants such as Twitter, Pinterest and Netflix, so it has the muscle to support the most demanding needs we, or pretty much any company, could have (check out Amazon’s case studies if you still need convincing). Foursquare.org found the flexibility and power appealing. Amazon’s bandwidth is cheap and its uptime, while not perfect, is better than any standard Web hosting we have seen. Prices start at $0.095 per GB of storage per month and $0.120 per GB of bandwidth served, and get cheaper as you get bulkier.
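To get a feel for the numbers, here is a rough back-of-the-envelope sketch in PHP using the two starting rates quoted above. The function name and the 100 GB traffic figure are hypothetical, chosen only for illustration; 22 GB is the amount of server space this project ended up freeing. Real bills depend on Amazon’s current price tiers.

```php
<?php
// Starting rates quoted in this article (USD per GB); Amazon's tiers change over time.
const STORAGE_RATE_PER_GB = 0.095;
const BANDWIDTH_RATE_PER_GB = 0.120;

// Hypothetical helper: estimate the cost for a given amount stored and served.
function estimate_s3_cost(float $gbStored, float $gbServed): float
{
    $storage = $gbStored * STORAGE_RATE_PER_GB;
    $bandwidth = $gbServed * BANDWIDTH_RATE_PER_GB;
    return round($storage + $bandwidth, 2);
}

// 22 GB stored, plus an assumed 100 GB of traffic.
printf('Estimated cost: $%.2f' . "\n", estimate_s3_cost(22, 100));
```

At these rates the bandwidth line dominates quickly, so traffic is the number worth estimating carefully.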

Making the switch from storing their data locally to Amazon’s cloud service with ExpressionEngine took many steps and careful testing along the way. The benefits far outweigh the cost: they now enjoy a site that is faster and more reliable and, best of all, storing files with Amazon S3 is actually cheaper than their own local option. So how was it done?

Step 1: Backups

We backed up all of Foursquare.org’s files, their EE installation and their database. An obvious step, but loss of a client’s files is unthinkable and hard to recover from.

Step 2: Install and Configure add-ons

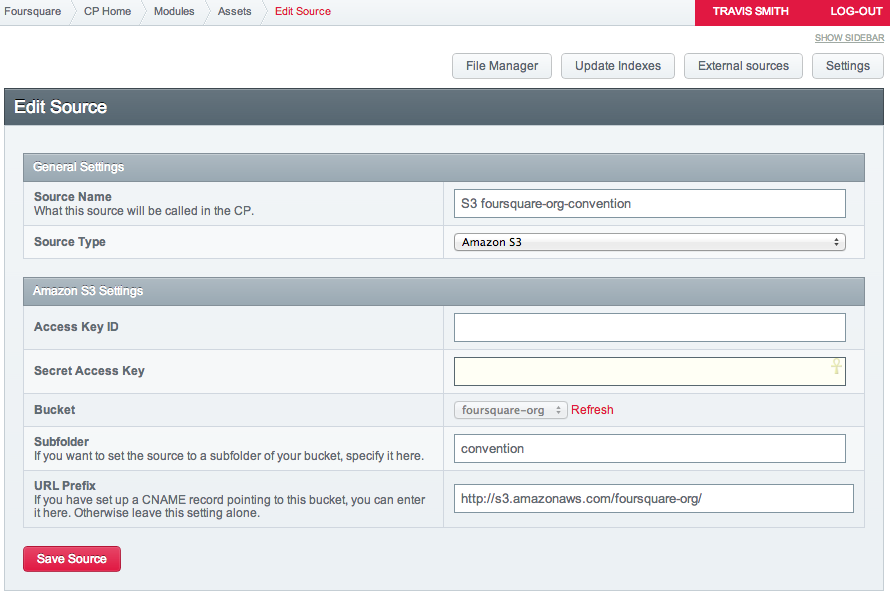

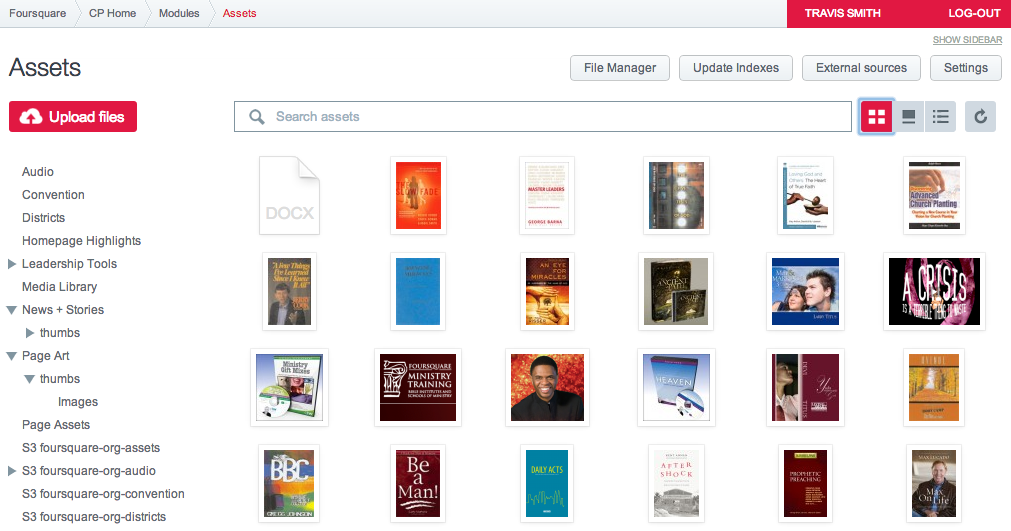

Foursquare.org was already using a third-party file manager called “Assets” from Pixel and Tonic. Assets integrates with S3 directly, and makes moving assets pretty simple. If you haven’t installed Assets, you’ll need to do so, and then convert all “File” fields to “Assets” fields—both regular fields and matrix fields. This may also require changes to your templates to use the “Assets” template tags.

We also installed CE_Img, an add-on from Causing Effect that handles resizing images; you’ll want to install its extra extension, the Amazon S3 add-on, as well.

Step 3: Set up S3

Once Assets was handling our site’s files and running smoothly, we set up an account on S3 and did small tests to make sure everything was serving properly, and that files could be uploaded, moved from local to S3 and back, and deleted.

Storage in S3 can be set up in two ways. The first is to create several “buckets” (think file folders, kind of) and use these to store your data; each bucket can later be mapped to a subdomain. The second is to set up a single bucket and use sub-folders within it. Going with the second option let us map to a single domain, and overall it was the simpler and better route in terms of speed and efficiency, but the choice is yours.
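Either layout ends up as a predictable URL. As a rough illustration, the sketch below builds the two address styles that show up later in this article for a single bucket with sub-folders: path-style (`s3.amazonaws.com/bucket/key`) and bucket-as-subdomain (`bucket.s3.amazonaws.com/key`). The function names and sample file names are hypothetical; `foursquare-org` is the bucket name used in our script.

```php
<?php
// Hypothetical helpers; the names are ours, not part of any S3 library.

// Path-style: the bucket is the first path segment.
function s3_path_style_url(string $bucket, string $key): string
{
    return 'http://s3.amazonaws.com/' . $bucket . '/' . encode_key($key);
}

// Bucket-as-subdomain style: the bucket is part of the host name.
function s3_subdomain_url(string $bucket, string $key): string
{
    return 'http://' . $bucket . '.s3.amazonaws.com/' . encode_key($key);
}

// URL-encode each path segment but keep the slashes that act as "sub-folders".
function encode_key(string $key): string
{
    return implode('/', array_map('rawurlencode', explode('/', ltrim($key, '/'))));
}

echo s3_path_style_url('foursquare-org', 'assets/annual report.pdf'), "\n";
echo s3_subdomain_url('foursquare-org', 'audio/example.mp3'), "\n";
```

The “sub-folders” are really just prefixes in the object key, which is why the same key works unchanged in both styles.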

Step 4: Thumbnails and Resized Images

We use images in multiple places on the site, and dynamically resize them both for thumbnails and to ensure they don’t break the layout or take too long to download. We use CE_Img, an add-on that takes an image file, shrinks it or crops it, and then saves that modified version so it only needs to be generated once. (ExpressionEngine can do this when you upload files, but it’s not as flexible and dynamic.) So now we needed to configure the CE_Img add-on to save, and serve, images from Amazon S3 instead of from the local server. Otherwise our migration would be basically for nothing, because we’d just be copying files to S3, then fetching them from there and re-serving them from our own server. Not much point in that.

Serving CE_Img files from S3 is pretty straightforward, but it’s a necessary step. Causing Effect has clear instructions on modifying the config.php file for this.

For our project, actually, this was a two-step process because we first had to switch from Imgsizer, an older add-on, to CE_Img, which didn’t exist when the site was first built. Out with the old and in with the new!

Step 5: Moving the files

Having switched all the fields to play nice with S3, we could begin the migration process. We started by grabbing all the files in an Assets local directory, thousands in fact, and inside ExpressionEngine dragged these to an S3 bucket. Nope! We found that this caused problems and froze the control panel. By trial and error, we settled on a comfortable limit of 30 files per drag (your mileage might vary) and then settled in to watch some Breaking Bad while we clicked and dragged and clicked and dragged and…
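If you want to plan the drags ahead of time, a few lines of PHP can show how many batches a directory will take. This is only a planning aid under our assumptions: the 30-file limit is the one that worked for us, and the file names below are made up.

```php
<?php
// Split a list of file names into batches small enough to drag in one go.
function plan_batches(array $files, int $batchSize = 30): array
{
    if ($batchSize < 1) {
        throw new InvalidArgumentException('Batch size must be at least 1.');
    }
    return array_chunk($files, $batchSize);
}

// Made-up example: 95 files named file-1.jpg through file-95.jpg.
$files = array_map(function ($n) { return "file-$n.jpg"; }, range(1, 95));
$batches = plan_batches($files);

echo count($batches), " batches; the last one has ", count(end($batches)), " files\n";
```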

Step 6: Updating fields

Finally, the trickiest step. If you’re following along, you want to set this step up and test it before you move the files, but only run it afterwards. You see, files you pick and store via an Assets field will automatically update where they point when you move a file from local to S3 (or from one local file directory to another, too).

However, additional field types in ExpressionEngine might store references to files, and those other field types do not update automatically: text fields, textarea fields, radio buttons… and add-on fields galore: WYGWAM fields were an issue on our site. So we needed a PHP script that would change references to these files. On top of this, if you use a complex field type like Matrix or Grid, these contain sub-fields which can have the problematic textarea and WYGWAM fields inside them.

We wrote a bit of PHP to search textarea and WYGWAM fields and do a search-and-replace to point to the appropriate Amazon S3 sub-folders. If you use other fields that store references to files, you would have to add those to the list as well. We put this PHP code in a template and ran it after the files were moved to S3. It didn’t take long, but it could be searching through a lot of data and your hosting plan might not be as generous with CPU time, so consider that when running this; you might have to do it in batches. I suppose we could have written a bigger script that first looked for matching types of fields to update, then updated them. Let’s leave that as an exercise for you.

    $EE =& get_instance();

    // WYGWAM and textarea fields
    $list_of_fields = array(1,31,46,65,109,106,114);

    // WYGWAM and textarea matrix fields
    $list_of_matrix_cols = array(9,27);

    // file directories we’re switching
    $URLs_to_replace = array(
        '{filedir_1}' => 'http://s3.amazonaws.com/foursquare-org/assets/',
        'https://hopstudios.com/clientimages/' => 'http://s3.amazonaws.com/foursquare-org/audio/'
    );

    foreach ($list_of_fields as $i => $fieldnum) {
        foreach ($URLs_to_replace as $from => $to) {
            $sql = "UPDATE exp_channel_data SET field_id_$fieldnum = REPLACE(field_id_$fieldnum," . $EE->db->escape($from) . "," . $EE->db->escape($to) . ") WHERE field_id_$fieldnum != '' ";
            $EE->db->query($sql);
        }
    }

    foreach ($list_of_matrix_cols as $i => $colnum) {
        foreach ($URLs_to_replace as $from => $to) {
            $sql = "UPDATE exp_matrix_data SET col_id_$colnum = REPLACE(col_id_$colnum," . $EE->db->escape($from) . "," . $EE->db->escape($to) . ") WHERE col_id_$colnum != '' ";
            $EE->db->query($sql);
        }
    }
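Because a search-and-replace across every entry is hard to undo, it can help to try the URL map on a few sample strings before touching the database. The sketch below is a hypothetical dry-run helper, not part of the script we ran: it applies the same pairs in the same order with PHP’s `str_replace`, which for plain substrings like these should give the same result as MySQL’s `REPLACE()`. The sample HTML is invented.

```php
<?php
// Same map as the migration script: old reference => new S3 location.
$URLs_to_replace = array(
    '{filedir_1}' => 'http://s3.amazonaws.com/foursquare-org/assets/',
    'https://hopstudios.com/clientimages/' => 'http://s3.amazonaws.com/foursquare-org/audio/',
);

// Hypothetical dry-run helper: apply every pair, in order, to one string.
function rewrite_file_references($text, array $map)
{
    return str_replace(array_keys($map), array_values($map), $text);
}

// Invented sample content, like what a textarea field might hold.
$sample = '<img src="{filedir_1}logo.png"> '
    . '<a href="https://hopstudios.com/clientimages/talk.mp3">Listen</a>';

echo rewrite_file_references($sample, $URLs_to_replace), "\n";
```

Running a handful of real field values through a helper like this makes it easier to spot a wrong trailing slash or a missed directory before the `UPDATE` statements run.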

Step 7: .htaccess

We then added the following lines to our .htaccess file, to catch any outside requests to files that didn’t exist anymore, so they’d be properly fetched from Amazon S3 instead of showing a missing file.

    RewriteCond %{REQUEST_URI} /images/assets [OR]
    RewriteCond %{REQUEST_URI} /images/audio
    RewriteCond %{REQUEST_FILENAME} !-f
    RewriteRule ^images/(.*) http://foursquare-org.s3.amazonaws.com/$1 [L]

It simply says: If a file doesn’t exist locally, and it matches the pattern of a file directory we’ve moved (the OR is important for that), go look for it at Amazon.
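To make the logic concrete, here is the same decision written out as a small PHP function. It is only an illustration of how we read the rule, not how Apache evaluates it internally; the function name and the sample paths are hypothetical.

```php
<?php
// Returns the S3 URL a request would be sent to, or null if the rule does not apply.
function s3_fallback_url(string $requestUri, bool $existsLocally): ?string
{
    // RewriteCond ... /images/assets [OR] ... /images/audio
    $inMovedDirectory = strpos($requestUri, '/images/assets') !== false
        || strpos($requestUri, '/images/audio') !== false;

    // RewriteCond %{REQUEST_FILENAME} !-f
    if (!$inMovedDirectory || $existsLocally) {
        return null;
    }

    // RewriteRule ^images/(.*) ... $1 (in .htaccess the leading slash is already stripped)
    if (!preg_match('#^images/(.*)#', ltrim($requestUri, '/'), $matches)) {
        return null;
    }
    return 'http://foursquare-org.s3.amazonaws.com/' . $matches[1];
}

var_dump(s3_fallback_url('/images/assets/logo.png', false)); // moved file: goes to S3
var_dump(s3_fallback_url('/images/assets/logo.png', true));  // still on disk: served locally
var_dump(s3_fallback_url('/images/icons/star.png', false));  // not a moved directory: untouched
```

Without the `[OR]`, the two directory conditions would both have to match at once, which a single request path rarely does.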

Step 8: Delete any files remaining

There shouldn’t be any files remaining in your directories if the Assets migration ran properly. So we looked to see if there were any, and there weren’t. Phew. The backed-up files, now that the job was done, were moved off the server and afterwards we hoisted a lovely glass of bubbly beverage in celebration of a job well done. We were finished.
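Checking by hand works for a couple of directories; for more, a short script can do the looking. The sketch below is a hypothetical helper (not something Assets provides) that walks a directory tree and lists any regular files still in it. The path in the example is a placeholder.

```php
<?php
// List every regular file left under $dir, including sub-directories.
function remaining_files(string $dir): array
{
    if (!is_dir($dir)) {
        return array();
    }
    $found = array();
    $iterator = new RecursiveIteratorIterator(
        new RecursiveDirectoryIterator($dir, FilesystemIterator::SKIP_DOTS)
    );
    foreach ($iterator as $file) {
        if ($file->isFile()) {
            $found[] = $file->getPathname();
        }
    }
    sort($found);
    return $found;
}

// Placeholder path: point this at a directory you migrated.
$leftovers = remaining_files(__DIR__ . '/images/assets');
echo count($leftovers) === 0 ? "Nothing left behind\n" : implode("\n", $leftovers) . "\n";
```

Note that a missing directory is reported the same way as an empty one, so double-check the path before trusting a clean result.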

End results? This project freed up 22GB of server space on Foursquare.org’s local server, improved their site’s page load speed noticeably, cut their bandwidth bill and their server’s traffic load overall, and we have confidence that S3 will guarantee the reliable delivery of all their files. And S3 will serve large files pretty much as quickly as a visitor’s pipe will permit, which is nice. We recommend S3 as a storage option to just about anyone who has reached a certain size of site.

Let us know if you have any questions or suggestions of how to improve this next time!
